Over the past year or more, the transition to hybrid work has been forcibly turbo-charged. It has brought into sharp focus the challenges enterprises face in supporting hybrid work while maintaining consistent security and performance.

The transition to multi-cloud

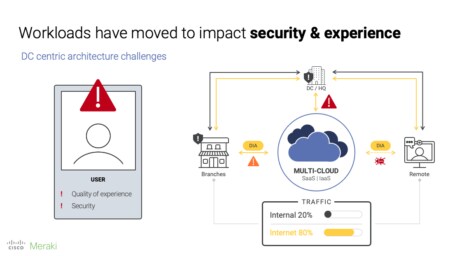

Over the past few years, organizations have been shifting workloads and apps from their data centers to multi-cloud environments. For many organizations, most critical traffic is now internet-bound. Yet their continued reliance on data centers, the hub of traditional backhauled architectures, inserts an unnecessary intermediary that costs performance. For end users at branch and remote locations, the result is a poor quality of experience (QoE) for critical cloud-based workloads and apps, whose traffic is forced to take a longer route.

Naturally, one way to improve QoE would be to let internet-bound traffic bypass the data center altogether with direct internet access (DIA). But what about security? Security is precisely why many organizations stuck with the traditional hub-and-spoke architecture, and why some still do: the enterprise security measures are in place at the data center.

In a parallel and related trend, IT professionals have continued to deploy technologies to upgrade infrastructure and support new digital initiatives. The reasons are many and often complicated, but the upshot is that most IT organizations run an infrastructure made up of multiple vendors' technologies. With each added vendor, an IT team's maintenance, management, and security workload increases. Multi-vendor environments are inevitably siloed, with often-complex integrations that yield limited visibility at best. In practice, IT often learns that a user is experiencing poor performance only when that user files a helpdesk ticket.

Fundamentally, this kind of complex environment is brittle and slow to adapt when it must change quickly.

The pandemic amplified everything

What had been a gradual transition to a hybrid workforce happened practically overnight. IT teams suddenly found themselves supporting users spread across as many locations as there were users.

Beyond simply getting employees connected to the resources they needed, there was the gargantuan challenge of replicating data center or branch security measures outside those locations. Close behind security came QoE: how can IT maximize the performance of critical workloads and apps for employees working outside the office's SD-WAN environment?

According to a study by Freeform Dynamics, demand for a single, streamlined QoE will become paramount, and with remote work set to continue in some form, that need is unlikely to fade. A Gartner survey found that 90% of respondents expect to continue allowing remote work, at least part-time, even after most people have been vaccinated against COVID-19.

The journey from here

Over the long term, the ultimate problem IT organizations are being asked to solve is achieving consistent security and QoE regardless of an end user's location.

This is where SASE comes in: it aims to deliver exactly that.

SASE, short for Secure Access Service Edge and pronounced "sassy," is a label for a consolidated architectural solution that provides effective and homogeneous levels of security and experience for users anywhere (e.g., the office, home, or a coffee shop) on any device. To achieve this, SASE proposes converging networking and network security functions and shifting them toward an as-a-service cloud edge model.

Adopting this architecture will be a true journey for enterprises, partners, and vendors, one that will evolve over time. Multiple factors will influence how much of the SASE architecture enterprises choose to implement, and when.

Listen on demand as Imran discusses what’s next for SASE on the Meraki Unboxed Podcast, available March 10th.